UI Testing with Eclipse Jubula: Executing the Tests (3)

In my last blog post, everything was pure theory (if you're looking for the start of the Jubula series, look here): I specified a few tests, but there's no connection yet to any real application under test. Let's rectify this!

Before starting up Jubula today, look for the "AUT Agent" service and start it. The AUT Agent is our connection to the "real world". It takes commands from Jubula and executes them on the application under test (you can even run Jubula and the agent on different boxes for distributed testing). On Windows, you might have to start the agent via "Run as Administrator".

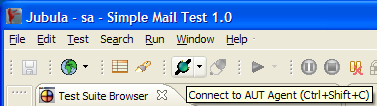
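If the connection attempt in the next step fails, it can help to check first whether the agent is reachable at all. Here is a minimal sketch of such a check. It is not part of Jubula and only assumes that the AUT Agent accepts TCP connections. The helper name `agent_reachable` is made up for this example, and the host and port are placeholders that you should replace with whatever your agent is configured to use.

```python
import socket


def agent_reachable(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        # create_connection raises OSError (refused, timed out, unknown host)
        # when nothing is accepting connections at the given address.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # Assumed values for illustration only: use your agent's host and port.
    host, port = "localhost", 60000
    state = "reachable" if agent_reachable(host, port) else "not reachable"
    print(f"AUT Agent at {host}:{port} is {state}")
```

A successful check only tells you that something is listening on that port. It does not prove that it is the AUT Agent.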

Next, start Jubula and open your project. Now, look for the "Connect to AUT Agent" toolbar button and press it in order to establish the connection to the agent we started before:

Next, start the test object via the "Start AUT" toolbar button. You should then see its ID listed in the "Running AUTs" view:

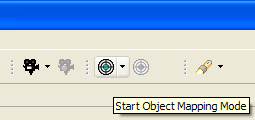

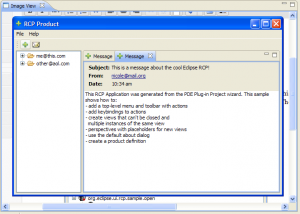

The application under test is running now, and you can view it on your screen. However, we still can't run any tests, as the logical components we specified last time are not yet mapped to their real component counterparts within the RCP application. Let's fix this by starting the "Object Mapping Mode", again from the toolbar:

Now activate the test object and move the mouse around within it. Notice the green borders around the various components? That's the Jubula Object Mapper showing you the components it has detected. In order to add any of these to your object mapping, you have to press Ctrl-Shift-Q.

You can collect all components at once like that if you want to, but I'd advise against it, because the object mapping gets crowded and confusing quickly. Instead, start with the few components we currently require for our tests to run. In my case, this means capturing the mail icon in the toolbar and, after clicking it, the dialog message text and the dialog "Ok" button.

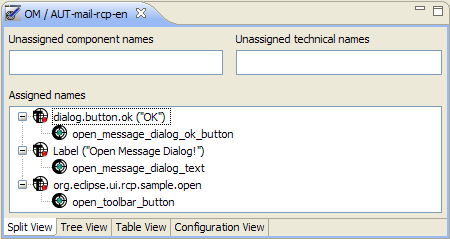

When done, switch back to Jubula. You should see a new editor called "OM /

All that's left to do now is to map the logical to the technical component names. You can do this by dragging each logical component name onto the respective technical one and dropping it there. The editor will change into this:

Aaand... - we're done with the object mapping. Your "Problems" view should be empty. This means that we can run our tests, at last! - Ok, nearly. First, we have to stop the object mapping mode again. I'm sure you'll find the respective toolbar button by yourself. :)
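To make the idea behind the object mapping a bit more tangible, here is a small conceptual sketch. It does not use any Jubula API. It just models the mapping as a dictionary from logical names (from the test specification) to technical names (identifying real widgets), and reports logical names that are still unmapped, roughly what the "Problems" view tells you. All component names and the `unmapped` helper are made up for illustration.

```python
# Logical names as used in the test specification (hypothetical examples).
logical_components = ["Open Message", "Message Text", "OK Button"]

# Logical name -> technical name of the widget in the AUT.
# The technical names are invented placeholders, not real Jubula identifiers.
object_mapping = {
    "Open Message": "toolbar/mail_button",
    "Message Text": "dialog/message_label",
}


def unmapped(logical, mapping):
    """Return the logical names that have no technical counterpart yet."""
    return [name for name in logical if name not in mapping]


print(unmapped(logical_components, object_mapping))  # ['OK Button']

# Mapping the last component leaves nothing to complain about.
object_mapping["OK Button"] = "dialog/ok_button"
print(unmapped(logical_components, object_mapping))  # []
```

Note that a check like this only catches missing mappings. A logical name that points at the wrong widget looks perfectly fine here and only shows up once the tests run.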

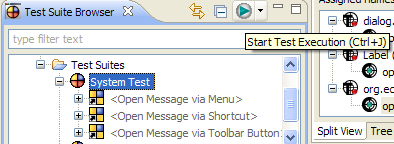

Select your "System Test" test suite in the "Test Suite Browser" view and run it:

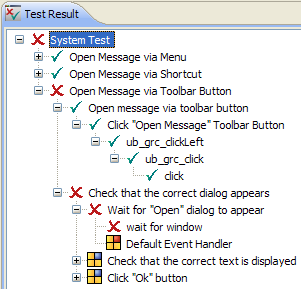

Hopefully, your automated tests will run now. - You can switch into the Test Execution afterwards and check the results of the test run:

Oops, my tests failed. What's the matter?

I'll go check the screenshot Jubula captured when the "wait for window" action failed. It shows a second message tab being open, and zero "Open Message" dialogs. Hmmm...

Oh right, I accidentally mapped the wrong toolbar button to my logical "Open Message" component... - I should have mapped the mail button to it, instead of the green plus sign.

I'll correct that (for some reason, I had to restart Jubula before it accepted my fixed object mapping), and maybe you've got a few things to correct, too, and then we're done!

You'll notice that there will always be some debugging of your test specifications going on here, unfortunately, but that's to be expected: Specifying tests is like developing in a lot of ways. Also, finding out what exactly made your tests fail can sometimes be harder than it looks, screenshots or not.

Alright, let's summarize what we've achieved up to here:

- In the first part of my blog series, there was some configuration to do in order to get the application under test to cooperate with Jubula. This is a one-time effort.

- In the second part, we specified a few tests. This is the part you'll have to put the most effort into. You absolutely want to get your test specifications right: Clear, concise, complete, robust, maintainable. This is always difficult to get right. However, in my humble opinion, Jubula gives you a lot of good tools for structuring and refactoring your test specifications to simplify this.

- In the third part, we mapped the components and executed the tests. Yay!

Before using Jubula in a real-world project, there are still a lot of things to clarify, of course, like:

- What kind of database should you use with Jubula (it defaults to an embedded H2)?

- How well does it cope with multiple team members specifying tests at the same time?

- How to integrate it into an Ant/Maven/SBT/Gradle/Buildr/whatever build?

- How to run it on a Continuous Integration server?

- How well do the exported test specifications play together with version control systems?

- ... and probably more stuff like that...